DeepSeek V3-0324 is an updated checkpoint of the DeepSeek V3 model; the suffix in its name encodes the release date, March 24, 2025. Early discussions suggest improvements in coding capabilities and complex reasoning. The model is available on GitHub (DeepSeek-V3) and Hugging Face (DeepSeek-V3-0324), reflecting its open-source nature and accessibility.

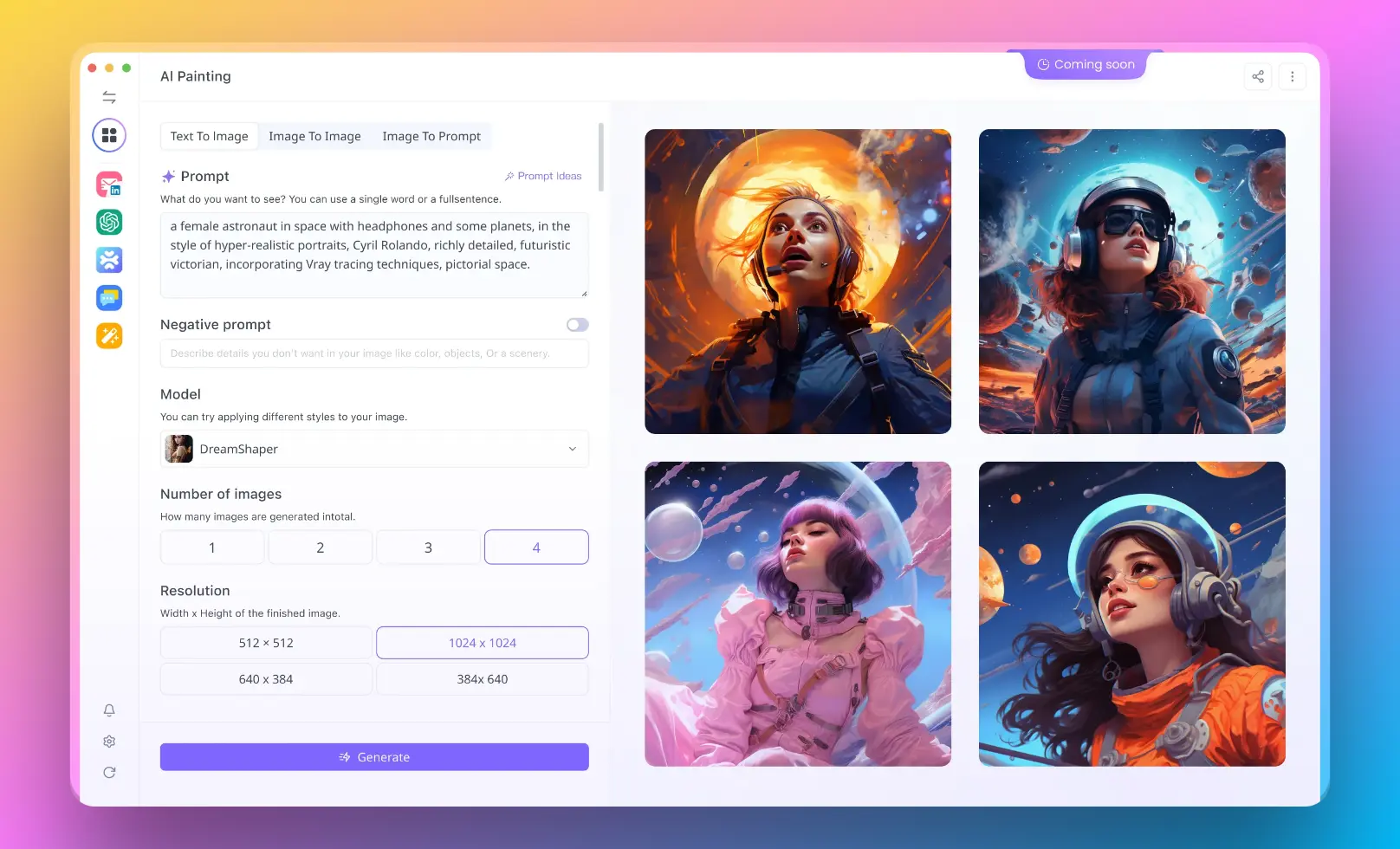

Introduction to DeepSeek V3-0324

DeepSeek V3-0324 is an open-source language model developed by DeepSeek AI and released on March 24, 2025. It is an updated version of the earlier DeepSeek V3, known for its large scale and efficiency. With 671 billion total parameters, of which only 37 billion are activated per token, it uses a Mixture-of-Experts design to handle complex tasks like coding, reasoning, and multilingual processing. This article explores its architecture, training, performance, and potential, offering insights for those interested in AI advancements.

Model Architecture of DeepSeek V3-0324

DeepSeek V3-0324 employs a Mixture-of-Experts (MoE) approach, where multiple expert networks specialize in different data aspects. This allows for a massive 671 billion parameters, with only 37 billion active per token, enhancing efficiency. Multi-head Latent Attention (MLA) compresses key and value vectors, reducing memory use and speeding up inference, especially for long contexts. The DeepSeekMoE architecture, a refined MoE variant, ensures load balancing without additional loss terms, stabilizing training. Additionally, the Multi-Token Prediction (MTP) objective predicts multiple future tokens, densifying training signals and enabling faster generation through speculative decoding.
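The routing idea above can be illustrated with a small sketch. It assumes a bias-adjusted top-k scheme: a per-expert bias influences which experts are selected, while the gate weights are computed from the raw affinities only, and the bias is nudged after each batch according to observed load. The function names, the toy affinity values, and the step size are hypothetical and chosen for illustration; this is not the model's actual implementation.

```python
def route_token(affinities, bias, top_k):
    """Select top_k experts by (affinity + bias); weight them by affinity only."""
    ranked = sorted(range(len(affinities)),
                    key=lambda i: affinities[i] + bias[i],
                    reverse=True)
    chosen = ranked[:top_k]
    total = sum(affinities[i] for i in chosen)
    # Gate weights are normalized over the selected experts.
    return {i: affinities[i] / total for i in chosen}


def update_bias(bias, load, step):
    """Lower the bias of overloaded experts and raise it for underloaded ones."""
    mean_load = sum(load) / len(load)
    new_bias = []
    for b, n in zip(bias, load):
        if n > mean_load:
            new_bias.append(b - step)
        elif n < mean_load:
            new_bias.append(b + step)
        else:
            new_bias.append(b)
    return new_bias


# Toy usage: 4 experts, 2 active per token (the article cites 256 routed, 8 active).
bias = [0.0] * 4
load = [0] * 4
for affinities in ([0.9, 0.8, 0.1, 0.2], [0.7, 0.9, 0.3, 0.1]):
    for expert in route_token(affinities, bias, top_k=2):
        load[expert] += 1
bias = update_bias(bias, load, step=0.01)
```

Because the balancing pressure comes from the bias rather than from an extra loss term, the training objective itself is left unchanged, which is the property the paragraph above describes.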

The model was pre-trained on 14.8 trillion high-quality, diverse tokens covering mathematics, programming, and multiple languages. It uses FP8 mixed precision, which reduces training cost and time compared with higher-precision training. Post-training includes supervised fine-tuning on 1.5 million instances across domains, followed by reinforcement learning, to refine capabilities such as reasoning and code generation. The full process took 2.788 million H800 GPU hours, which underscores its cost-effectiveness.

Performance and Evaluation of DeepSeek V3-0324

Benchmark results in the DeepSeek-V3 technical report are strong, particularly in coding and reasoning. The base model achieves 65.2% on HumanEval for code generation and 89.3% on GSM8K for math, outperforming many open-source models. After post-training, the chat model scores 88.5% on MMLU and 70.0% on AlpacaEval 2.0, competing with closed-source models like GPT-4o and Claude-3.5-Sonnet. Its 128K context window and the 1.8x tokens-per-second (TPS) speed-up from MTP highlight its practical efficiency.

This survey note provides a detailed examination of DeepSeek V3-0324, an open-source language model released by DeepSeek AI on March 24, 2025. It builds on the original DeepSeek V3, released in December 2024, and is noted for its advances in coding and reasoning tasks. The following sections cover its architecture, training, evaluation, and future implications for AI researchers and enthusiasts.

Background and Release

Model Architecture

The architecture of DeepSeek V3-0324 is rooted in the Mixture-of-Experts (MoE) framework, with 671 billion total parameters and 37 billion activated per token. This design, detailed in the technical report, keeps computation efficient by activating only a subset of experts for each token. Multi-head Latent Attention (MLA) compresses key and value vectors to shrink the KV cache, which speeds up inference. The model has 61 transformer layers, and each DeepSeekMoE layer has 256 routed experts and uses an auxiliary-loss-free load balancing strategy, which stabilizes training without additional loss terms. The Multi-Token Prediction (MTP) objective predicts one additional token beyond the next token (D=1); it densifies training signals and supports speculative decoding, giving 1.8x tokens per second (TPS) during inference.

| Architecture Component | Details |
|---|---|
| Total Parameters | 671B, with 37B activated per token |
| MLA | Compresses KV cache; hidden dimension 7168, 128 attention heads, per-head dimension 128 |
| DeepSeekMoE | 61 layers; 1 shared expert and 256 routed experts per MoE layer, 8 routed experts activated per token |
| MTP Objective | Predicts 1 additional token beyond the next token (D=1); loss weight 0.3 initially, then 0.1 |
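To give a feel for why compressing keys and values matters, the following back-of-the-envelope sketch compares the number of cached elements per token under standard multi-head attention with a compressed latent cache. The layer count, head count, and per-head dimension come from the table above. The latent width of 512 and the separate positional key width of 64 are assumptions not stated in this article, so the resulting ratio is illustrative only.

```python
def kv_cache_elements_per_token(n_layers, n_heads, head_dim,
                                latent_dim=None, rope_dim=0):
    """Count cached elements per token across all layers."""
    if latent_dim is None:
        # Standard attention: one key and one value vector per head.
        per_layer = 2 * n_heads * head_dim
    else:
        # Latent cache: one compressed vector plus a small positional key.
        per_layer = latent_dim + rope_dim
    return n_layers * per_layer


# Values from the table: 61 layers, 128 heads, per-head dimension 128.
mha = kv_cache_elements_per_token(61, 128, 128)
# Assumed latent sizes (not from the article): 512 + 64.
mla = kv_cache_elements_per_token(61, 128, 128, latent_dim=512, rope_dim=64)

print(f"standard cache: {mha:,} elements per token")
print(f"latent cache:   {mla:,} elements per token")
print(f"reduction:      {mha / mla:.1f}x")
```

Under these assumptions the cache shrinks by well over an order of magnitude, which is what makes long contexts such as 128K tokens more practical to serve.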

Training Process

Pre-training used 14.8 trillion tokens, enhanced with mathematical, programming, and multilingual samples. The data pipeline was refined to minimize redundancy, and documents were packed into training sequences without cross-sample attention masking. A Fill-in-Middle (FIM) strategy was applied at a rate of 0.1 using the Prefix-Suffix-Middle (PSM) format. The tokenizer is a byte-level BPE with a 128K-token vocabulary, modified for multilingual efficiency. FP8 mixed-precision training, validated at large scale, reduced costs: pre-training took 2.664 million H800 GPU hours and the full training run 2.788 million, an estimated $5.576 million at $2 per GPU hour. Post-training included supervised fine-tuning on 1.5 million instances, with reasoning data generated by DeepSeek-R1 and non-reasoning data generated by DeepSeek-V2.5 and verified by humans, followed by reinforcement learning.
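The Fill-in-Middle step can be sketched as follows. This is a minimal illustration, assuming that a document is split at two random points and rearranged into prefix, suffix, middle order with sentinel markers. The sentinel strings, the function name `to_psm`, and the character-level split are assumptions made for readability; a real pipeline would operate on tokens and use the tokenizer's own special tokens.

```python
import random

# Hypothetical sentinel strings; the real tokenizer defines its own.
FIM_BEGIN = "<|fim_begin|>"
FIM_HOLE = "<|fim_hole|>"
FIM_END = "<|fim_end|>"


def to_psm(document, rng, fim_rate=0.1):
    """With probability fim_rate, rewrite a document in Prefix-Suffix-Middle order."""
    if len(document) < 2 or rng.random() >= fim_rate:
        return document  # most documents are left as ordinary next-token data
    # Pick two cut points that split the text into prefix, middle, and suffix.
    a, b = sorted(rng.sample(range(len(document) + 1), 2))
    prefix, middle, suffix = document[:a], document[a:b], document[b:]
    # The model sees prefix and suffix first, then learns to produce the middle.
    return f"{FIM_BEGIN}{prefix}{FIM_HOLE}{suffix}{FIM_END}{middle}"


rng = random.Random(0)
sample = "def add(a, b):\n    return a + b\n"
print(to_psm(sample, rng, fim_rate=1.0))
```

With `fim_rate=0.1`, roughly one document in ten is rearranged this way, so the model keeps its ordinary left-to-right ability while also learning to fill in a gap given the surrounding context.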

| Training Aspect | Details |
|---|---|
| Pre-training Tokens | 14.8T, diverse and high-quality |
| Precision | FP8 mixed, tile-wise for activations, block-wise for weights |
| Post-training Data | 1.5M instances, SFT and RL, domains include reasoning and code |
| GPU Hours | 2.788M H800, total cost $5.576M at $2/GPU hour |
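The cost figure follows directly from the GPU-hour counts and the assumed rental price, as this short calculation shows. The variable names are illustrative; the numbers are the ones quoted in this section.

```python
PRETRAIN_GPU_HOURS = 2_664_000   # pre-training only
TOTAL_GPU_HOURS = 2_788_000      # full training run
PRICE_PER_GPU_HOUR = 2.0         # assumed rental price in USD, as in the text

# Hours spent on everything after pre-training.
remaining_hours = TOTAL_GPU_HOURS - PRETRAIN_GPU_HOURS
total_cost = TOTAL_GPU_HOURS * PRICE_PER_GPU_HOUR
pretrain_share = PRETRAIN_GPU_HOURS / TOTAL_GPU_HOURS

print(f"remaining stages: {remaining_hours:,} GPU hours")
print(f"estimated cost:   ${total_cost:,.0f}")
print(f"pre-training share of compute: {pretrain_share:.1%}")
```

The estimate covers only the GPU time of the training run at the stated hourly price; it does not include other expenses such as research or earlier experiments.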

Evaluation and Performance

Evaluation results, as per the technical report, are strong across benchmarks. Base-model (pre-training) evaluations include:

| Benchmark | Metric | Result | Comparison |
|---|---|---|---|
| BBH | 3-shot EM | 87.5% | Outperforms Qwen2.5 72B (79.8%), LLaMA-3.1 405B (82.9%) |
| MMLU | 5-shot EM | 87.1% | Ahead of DeepSeek-V2 Base (78.4%) and Qwen2.5 72B (85.0%) |
| HumanEval | 0-shot Pass@1 | 65.2% | Surpasses LLaMA-3.1 405B (54.9%), Qwen2.5 72B (53.0%) |
| GSM8K | 8-shot EM | 89.3% | Better than Qwen2.5 72B (88.3%), LLaMA-3.1 405B (83.5%) |
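The two metrics in the table can be sketched as follows. Exact match (EM) counts predictions that equal the reference answer, and Pass@1 is the k=1 case of the widely used unbiased pass@k estimator for code generation. This is a generic sketch: the whitespace normalization in `exact_match` and the function names are assumptions, and the evaluation harness behind the reported numbers may differ in its details.

```python
from math import comb


def exact_match(predictions, references):
    """Fraction of predictions equal to the reference after trimming whitespace."""
    hits = sum(p.strip() == r.strip() for p, r in zip(predictions, references))
    return hits / len(references)


def pass_at_k(n, c, k):
    """Unbiased pass@k estimate from n samples, of which c pass the unit tests."""
    if n - c < k:
        return 1.0  # every size-k subset contains at least one passing sample
    # 1 minus the probability that k samples drawn without replacement all fail.
    return 1.0 - comb(n - c, k) / comb(n, k)


print(exact_match(["42", " 7 "], ["42", "8"]))
print(pass_at_k(n=10, c=3, k=1))
```

For k=1 the estimator reduces to the plain fraction of passing samples, c / n, which is why Pass@1 is often described simply as the share of problems solved on the first attempt.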

Post-training, the chat model excels with 88.5% on MMLU, 70.0% on AlpacaEval 2.0, and over 86% win rate on Arena-Hard against GPT-4-0314, competing with closed-source models like GPT-4o and Claude-3.5-Sonnet. Its 128K context window and MTP-enabled 1.8x TPS highlight practical efficiency, with early discussions noting improved coding abilities compared to previous versions.
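The relationship between MTP and decoding speed can be approximated with a simple model. The sketch below assumes that each drafted token is accepted independently with a fixed probability and that a draft is only useful if all drafts before it were accepted. The acceptance probability of 0.8 is a hypothetical value chosen for illustration, not a figure from this article, and the model ignores the overhead of drafting and verification.

```python
def expected_tokens_per_step(accept_prob, draft_tokens=1):
    """Expected tokens emitted per decoding step under independent acceptance."""
    # The regular next token always counts (term i = 0); draft i contributes
    # only if it and every earlier draft were accepted, i.e. accept_prob ** i.
    return sum(accept_prob ** i for i in range(draft_tokens + 1))


# One additional predicted token (D=1) with an assumed 80% acceptance rate.
print(expected_tokens_per_step(0.8, draft_tokens=1))
```

Under this simplified model, one extra drafted token yields between 1x and 2x the tokens per step, so a speed-up of about 1.8x corresponds to most drafted tokens being accepted.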

Applications and Future Directions

DeepSeek V3-0324's capabilities suggest applications in automated coding, advanced reasoning systems, and multilingual chatbots. Its open-source nature, under MIT license for code, supports commercial use, fostering community contributions. Future directions may include refining architectures for infinite context, enhancing data quality, and exploring comprehensive evaluation methods, as suggested in the conclusion of the technical report.

Conclusion

DeepSeek V3-0324 stands as a significant advancement in open-source AI, bridging gaps with closed-source models. Its efficient architecture, extensive training, and strong performance position it as a leader, with potential to drive further innovations in natural language processing.